Create and execute any REST, SOAP, and GraphQL queries from within Postman. Create and save custom methods and send requests with the following body types:

URL-encoded: The default content type for sending simple text data.
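To make the URL-encoded body type concrete, here is a minimal Python sketch of what such a body looks like on the wire. The field names (`query`, `page`) are hypothetical and used only for illustration. The sketch uses the standard library rather than Postman itself, which builds the same kind of string when you fill in the form fields.

```python
from urllib.parse import parse_qs, urlencode

# Hypothetical form fields, for illustration only.
form_fields = {"query": "web scraping", "page": 2}

# URL-encoding joins key=value pairs with "&" and escapes special characters;
# spaces become "+" in this form encoding.
body = urlencode(form_fields)

# The header that tells the server how to interpret the body.
headers = {"Content-Type": "application/x-www-form-urlencoded"}

print(body)            # query=web+scraping&page=2
print(parse_qs(body))  # decoding recovers the original text values
```

Note that every value is sent as text, so the integer `2` arrives at the server as the string `"2"`.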

Handling edge cases

Be prepared for various scenarios, such as handling errors, dealing with CAPTCHAs, or extracting data from websites with complex structures.

Final thoughts on web scraping with Postman

Postman is an excellent tool for API testing and a valuable asset for web scraping tasks. With its user-friendly interface and powerful features, you can efficiently extract data from APIs and websites for analysis, research, or application development. Always remember to be responsible, ethical, and compliant with the target website’s policies while performing web scraping.

This post is part of our comprehensive Postman Mini-Course.

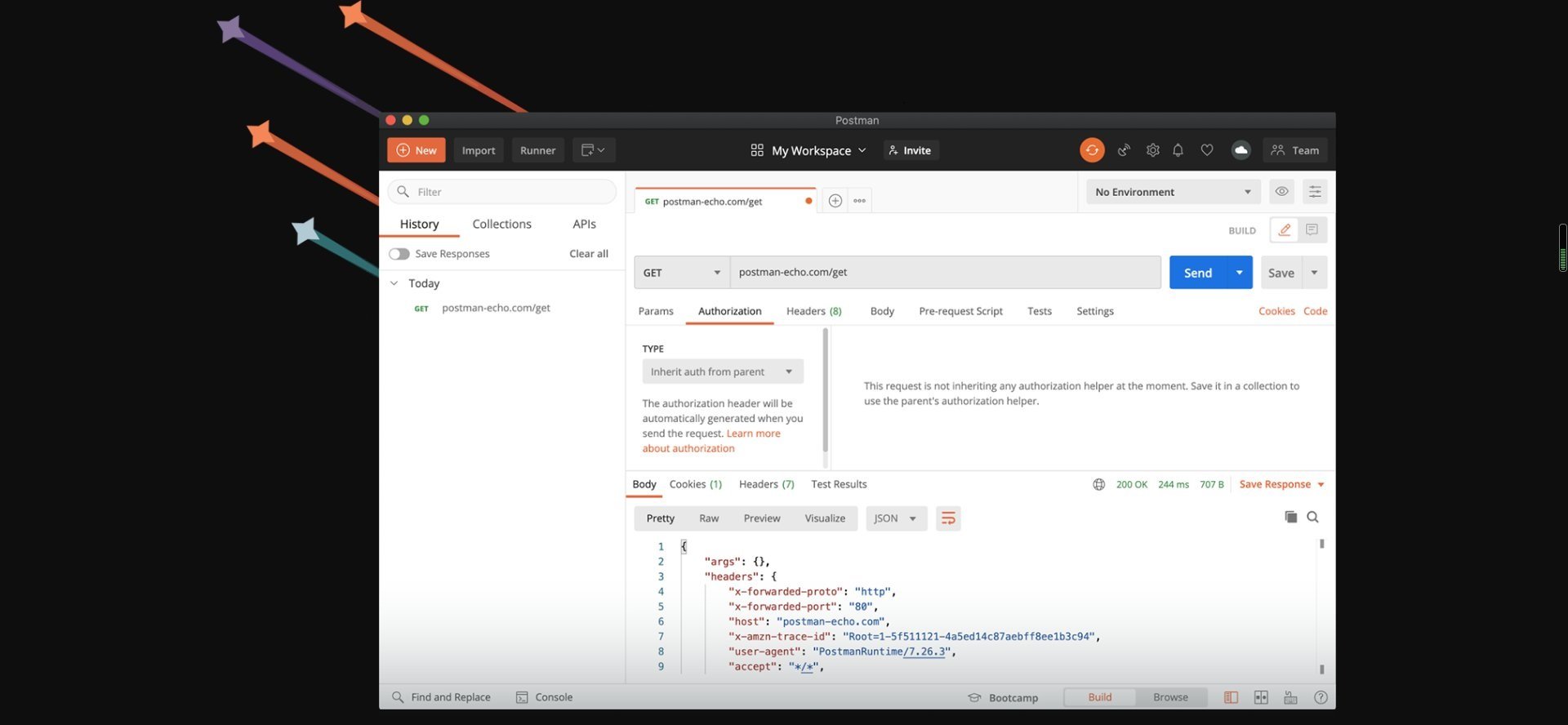

Use advanced web scraping libraries like Puppeteer to handle JavaScript-generated dynamic content (optional). Once you have extracted the data, you can save it by clicking the Save Response button in Postman.

Advanced web scraping techniques with Postman

Web scraping involves more than just sending HTTP requests and extracting data. To become a proficient web scraper with Postman, you must consider rate limiting, legal and ethical considerations, data organization, automation, and handling edge cases. Let’s explore these advanced web scraping techniques in detail:

Dealing with rate limiting

Always be mindful of rate limits set by websites or APIs to avoid overloading their servers. You can add logic to your requests to respect rate limits and implement wait times between consecutive requests.

Legal and ethical considerations

Before scraping data, check the website’s robots.txt file to see if scraping is allowed. Respect the website’s terms of service and privacy policies. If you’re unsure about scraping a website, consider contacting the website’s administrators for permission.

Data organization

Store the extracted data in a format that suits your needs, such as CSV files, databases, or other data repositories. Keep your data well-organized for easy retrieval and analysis.

Automation

Utilize Postman’s collection runner or Newman (the command-line version) to automate repetitive scraping tasks. Automation can save time and effort, especially when dealing with large datasets.
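The advice above on rate limits, robots.txt, and data storage can be sketched outside Postman in a few lines of Python. This is only an illustration under stated assumptions: the function names (`allowed_by_robots`, `fetch_all`, `rows_to_csv`) are hypothetical, the `fetch` callable stands in for whatever actually sends the request, and the one-second default interval is an arbitrary placeholder, not a value recommended by any particular site.

```python
import csv
import io
import time
from urllib.robotparser import RobotFileParser


def allowed_by_robots(robots_txt, user_agent, url):
    """Return True if the given robots.txt text permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


def fetch_all(urls, fetch, min_interval=1.0, sleep=time.sleep, clock=time.monotonic):
    """Call fetch(url) for each URL, waiting at least min_interval seconds between calls.

    sleep and clock are injectable so the pacing logic can be tested without waiting.
    """
    results = []
    last_call = None
    for url in urls:
        if last_call is not None:
            wait = min_interval - (clock() - last_call)
            if wait > 0:
                sleep(wait)  # pause so consecutive requests are spaced out
        last_call = clock()
        results.append(fetch(url))
    return results


def rows_to_csv(rows, fieldnames):
    """Serialize a list of dicts to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()
```

In practice, `fetch` would wrap an HTTP client call, and the CSV text would be written to a file. Checking `allowed_by_robots` before calling `fetch_all` keeps the legal and rate-limit checks in one place.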
